“Lola v. Skadden and the Automation of the Legal Profession,” published in the Yale Journal of Law & Technology and written by Michael Simon, Alvin Lindsay, Loly Sosa, and Paige Comparato, is an epic take on legal disruption from forward-leaning legal professionals. The article examines a recent decision that spells doom for traditional definitions of “legal work.”

In this post, we’ll summarize the article, lay out the facts of the Lola v. Skadden case, unpack the constructs (both literal and psychological) that hold the legal industry back from innovation, and outline what the authors see as the next steps for lawyers. The legal profession is standing on a precipice, and to make a lasting change, lawyers might need a leap of faith.

The legal field has protected itself from disruption by monopolizing legal work and strictly defining what the “practice of law” entails. ABA Model Rule of Professional Conduct 5.4 bars non-lawyers from investing in or running law firms, keeping the practice of law under the purview of lawyers. The “practice of law” is a unique type of labor, one that involves independent thought and judgment. So unique, in fact, is the labor of lawyering that under the Fair Labor Standards Act, lawyers practicing law are exempt from overtime pay. It’s that special!

If lawyering is supposed to be so special and full of independent judgment, temporary document review is the glaring exception: it is one of the most loathed positions in the legal world.

There is a wellspring of complaints and commiseration across the online legal world lambasting document review and the other rote grunt work that junior lawyers endure to climb the ladder. While some lawyers are content to blog or tweet about it, others take action and sue the firms they work for, seeking the overtime that lawyers normally can’t claim because law is so “special.” Enter complainer, human reviewer of computer-reviewed documents, and unwitting destroyer of legal careers: Dave Lola.

Lola, a contract attorney doing document review on a Skadden matter in 2012, hated reviewing documents that a computer had already checked. He sued Skadden for overtime, arguing that the work wasn’t “legal work” worthy of someone who practices law. Lola described the document review gig as “exploitive.”

The defendants argued that document review is a core function of an attorney’s work, while Lola’s team countered that his work did not amount to practicing law because it was mechanical and involved no independent legal judgment. On appeal, the Second Circuit agreed that document review a computer could perform entirely does not qualify as the “practice of law.”

“The parties themselves agreed at an oral argument that an individual who, in the course of reviewing discovery documents, undertakes tasks that could otherwise be performed entirely by a machine cannot be said to engage in the practice of law.”

Lola complained that his work wasn’t special enough – and the courts agreed. That means he unwittingly carved out an opening for disruption. State bar associations have strict rules against “non-lawyers” running law firms or performing legal work, and the unauthorized practice of law is a serious offense. Those rules have protected the super special work of low-level attorneys from disruption by “non-lawyers” and by companies pushing tech solutions to firms. Until now.

All that special work that once was the foundation of a legal career is going to the robots, and lawyers who used to complain about it are going to have new complaints while they wait for an interview for assistant manager at their local Best Buy.

The craziest part of this story: Lola won his appeal and eventually received a small settlement, but he could not find any more work as a contract reviewer. He felt he had been blackballed by the industry. Unable to pay his bills, he was forced to live in his car, his marriage ended during the court battle, and he left the practice of law to build houses. The guy who hated his job so much he burnt it down isn’t even a lawyer anymore.

Why is legal ‘disruption’ different this time?

In the past, the legal industry had time to carefully consider whether to adopt tech innovations. Lola, and the changing definition of “legal work,” changed all that. As technology advances exponentially and moves deeper into legal practice, lawyers urgently need to differentiate their human contributions from machine-led tasks.

Rule-based computing was all the rage until it ran up against its limits in the 1980s. From the 1980s through the late 1990s, artificial intelligence (AI) went through a dark period, held back by inadequate processing speed. Once parallel computing became widely practical in the late ’90s, processing power exploded and progress in machine learning and AI was back on.

Lawyers who came of age and built their careers in the ’80s and ’90s had been hearing since the late ’60s that computers would take their jobs, and it never happened. Now those lawyers run firms, and based on that history, they see no direct threat and no reason to invest in innovation.

But with precedent-shattering innovations in processing speed, software, and storage, automation within the legal profession is inevitable. Lawyers can either manage it themselves or have it taken out of their hands.

Challenges of the legal profession

Law is a self-preserving, self-regulated monopoly that has enjoyed immunity from outside challenges, especially from “non-lawyers.” Because state bar associations shield the industry from competition, economists have been able to estimate the earnings premium these regulations create. The most recent estimate, from 2004, puts that premium at $64 billion, or about $71,000 for each lawyer practicing at the time. No wonder lawyers are hesitant to open the source code of law.

The unauthorized practice of law (UPL) has been the most effective tool for enforcing the legal industry’s regulations, but the decision in Lola challenges it. If “tasks that could otherwise be performed entirely by a machine cannot be said to engage in the practice of law,” the logical corollary is that once a task can be performed entirely by a machine, it is no longer “the practice of law.” So if a software company builds a program that can handle an entire task currently done in law firms, it might be able to take that work away from the firms altogether. But would that still be considered UPL?

Partnership model favors safety and short-term results

The way law firms are structured holds back innovation. The partnership model used by most firms creates strong incentives to maximize present gains and disincentives to invest in the future. Law firms close out their books at the end of each year, report quarterly, and set timeframes and incentives that prioritize short-term gains. Add the tech “revolutions” of the past that never delivered, and lawyers see no reason to change.

Psychological makeup of legal industry prevents innovation

Structural barriers are not the only thing stifling innovation: the legal profession also attracts people whose temperaments resist the radical shifts in behavior needed to evolve the practice of law. Dr. Larry Richard, who has spent the last 40 years studying the psychology of the legal profession, has found that lawyers outscore the general population on skepticism and pessimism. Given what lawyers do for a living, these traits are key components of critical thinking. But the traits that make lawyers good at their jobs can hinder them when it comes to innovating in the field.

According to Dr. Richard, lawyers also score abnormally low on “resilience,” the ability to bounce back from failure or criticism. Lawyers who don’t like to fail will have a hard time adapting to the “fail-fast” world of tech innovation.

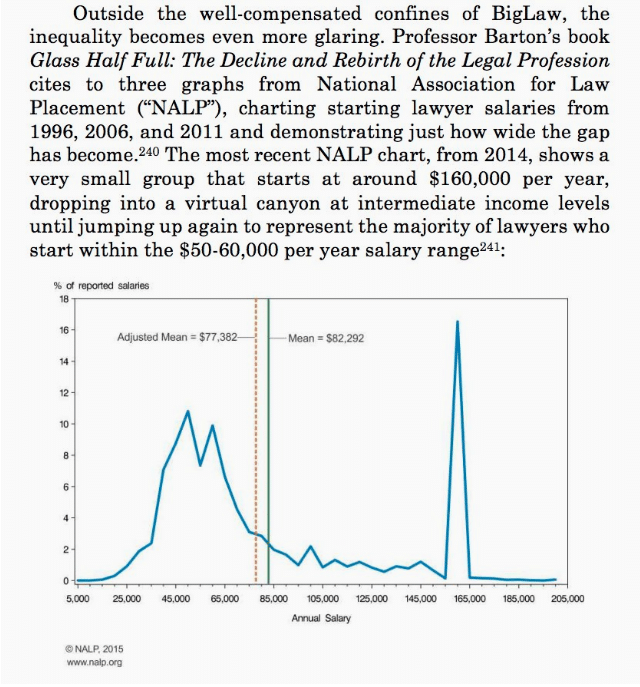

The winner-takes-all economy of Silicon Valley behemoths is also a threat to legal innovation. Across industries, a growing share of wealth is being captured by a shrinking share of individuals, and law is no exception: average AmLaw 100–200 profits rose from $324,000 in 1987 to $1.6 million in 2017.

The salary distribution in the legal profession is far from even.

Visions of the future

In e-discovery, the displacement is already happening. Thanks to legal procurement and document-review software vendors, the number of reviewers per project fell from 32 in 2010 to 11 in 2014. And because machines are taking a greater role in culling documents and performing the initial analysis, the number of documents that need human review is dropping dramatically as well.

But isn’t this the work Lola was complaining about in the first place? And if the corporate ladder it once led to is disappearing, who wants to do the grunt work anyway? With more lawyers flooding the marketplace and fewer jobs opening up, what’s the answer?

According to Ron Dolin, a research fellow at Harvard Law School, “At some point, document review and due diligence won’t be about dozens of humans looking at millions of documents. . . it’ll be about getting a handful of people to run the software.” For the lawyers who can partner with engineers to ensure that these systems run correctly, the time is now.

The investments needed to make a law firm more efficient won’t produce higher profits this year or next. Instead, the payoff is long-term relevance and survival.

Staving off doom will require a conversational shift within the legal community. This future won’t be a utopian fantasy world, nor will it be Terminator. The profession needs to explore how lawyers can use tech to augment their efficacy and skill sets to differentiate their human contributions from machine-led tasks.

In their book Only Humans Need Apply, Thomas Davenport and Julia Kirby propose seven roles in which humans can add value by working alongside machines. These roles carry over to any disrupted industry, and especially to law.

- Design and create the machine’s thinking.

- Provide “big picture” perspective.

- Integrate and synthesize across multiple systems and results.

- Test and monitor.

- Know how to best apply the system.

- Elicit the necessary info.

- Persuade humans to take action on automated recommendations, because no matter how smart our machines become and no matter how good the advice they provide, it is ultimately humans who have to take – or not take – the actual actions that follow.

The lawyer who can wield a variety of tools to analyze trends and other important factors more efficiently, rather than just running word searches on subscription databases, will be the one clients hire. Instead of asking “why innovate?” lawyers should ask “why not?” If law is a client-service industry, why not strive for the next level of service?

Firms should engage with their different practice groups and attorney levels, along with technical, operational, and administrative staff, in order to understand which tasks could be accomplished through automation.

As clients become far more discerning, the successful law firms will be the ones that offer something clients won’t find at 10 other firms doing essentially the same thing. Simply keeping up with the pack misses the point that most of the pack is itself lagging.

A future full of machine-reviewed data is great, but it also opens a can of worms: unintended consequences and new opportunities for error. Lawyers must fill the gaps between mechanical outputs and societal realities, and only the lawyers who can effectively leverage AI will keep the profession from a future of deeply flawed, error-filled legal services.

Doctors have embraced working alongside AI to the benefit of patients. Large swaths of their responsibilities now run through software, DRS monitoring systems are a standard feature of the job, and doctors have embraced them.

Lawyers will need to oversee, control, review, and analyze AI outputs. Without lawyers’ knowledge and ethical oversight, society will have to trust the algorithms blindly. Algorithms currently used in parole hearings accurately predicted recidivism only 22% of the time. Worse, the algorithms absorb the racial biases in their input data sets, flagging black defendants as potential repeat offenders nearly twice as often as white ones.

On top of that, the judges and lawyers involved in these cases had no idea how the algorithms worked, and no way to find out, because the companies that built them kept that information proprietary. Lawyers will therefore need the skills to either challenge these systems or argue for their use.

Without lawyers to inject accountability, we risk a future of a thoughtless practice of law that would leave the profession and society poorer for the attempt.

AI can think better, faster, cheaper. But can AI reassure a client? Can it tell what a client REALLY needs? Can it think of creative solutions? Can AI sense what is fair? Beyond rules, can it obtain wisdom? Does it know how far to bend rules – or break them – to attain justice?

So the question of tech disrupting law isn’t a “when” question, it’s a “how and by how much” question. The legal profession needs to stop relying on the armor that has protected it in the past, overcome the fear of tech, and find the means to wield it to society’s benefit.

WANT MORE?

If you want more info on legal marketing, sign up for the Consultwebs newsletter, follow us on social media, and subscribe to the LAWsome Podcast.